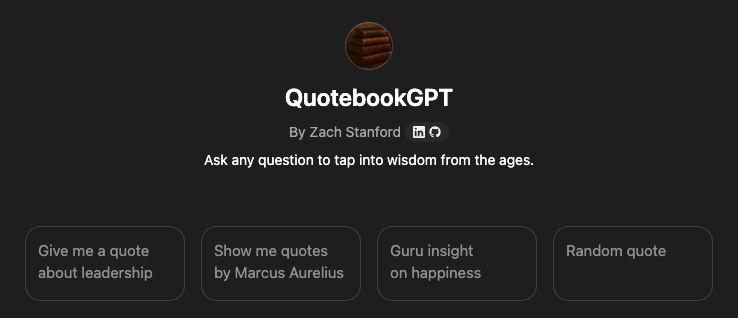

Building Custom GPTs: Context Is King

The right quote at the right time can have a profound impact. QuotebookGPT is my system for capturing and recalling insights from great books and the wisdom of others. It reads from and writes to a live Google Sheet, so the model always answers from my personal library of meaningful quotes.

The Backstory

Dave was one of my first managers when I was starting my career and he quickly became a mentor and lifelong friend. At one point he shared his personally curated quotebook with me, a collection of hundreds of snippets of wisdom he had gathered over the years. The quotes carried an extra resonance for me because Dave had infused them with his personal context, insights, and reflections.

So I kept building on what he started. Over the past decade, I have added hundreds of my own quotes from paradigm-shifting books and other sources of inspiration. Dave and I have talked about how one day we would build an app to organize our catalog of quotes and to make them instantly accessible. Naturally, when custom GPTs were announced, building a Quotebook app was my first exploratory project.

QuotebookGPT in Action

Before diving into how I built QuotebookGPT, here is an overview of what it can do.

Recall Features

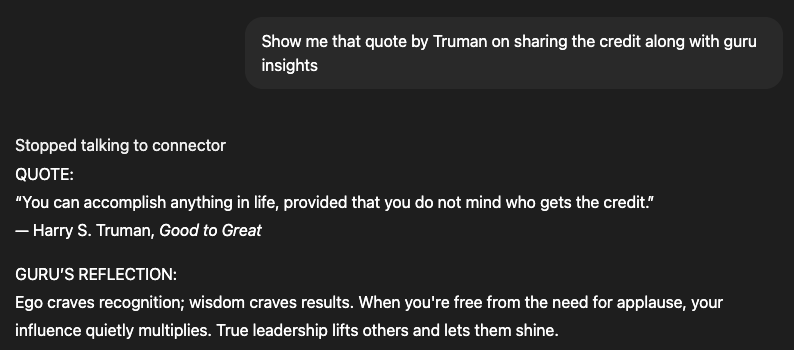

- Recall from memory cues

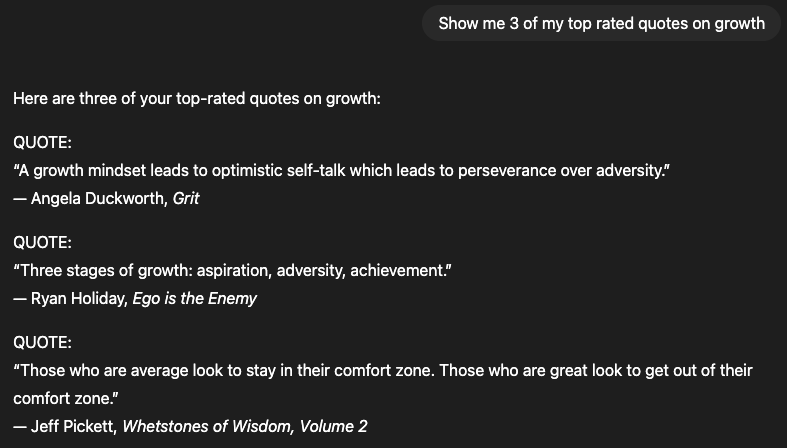

- Search by topic

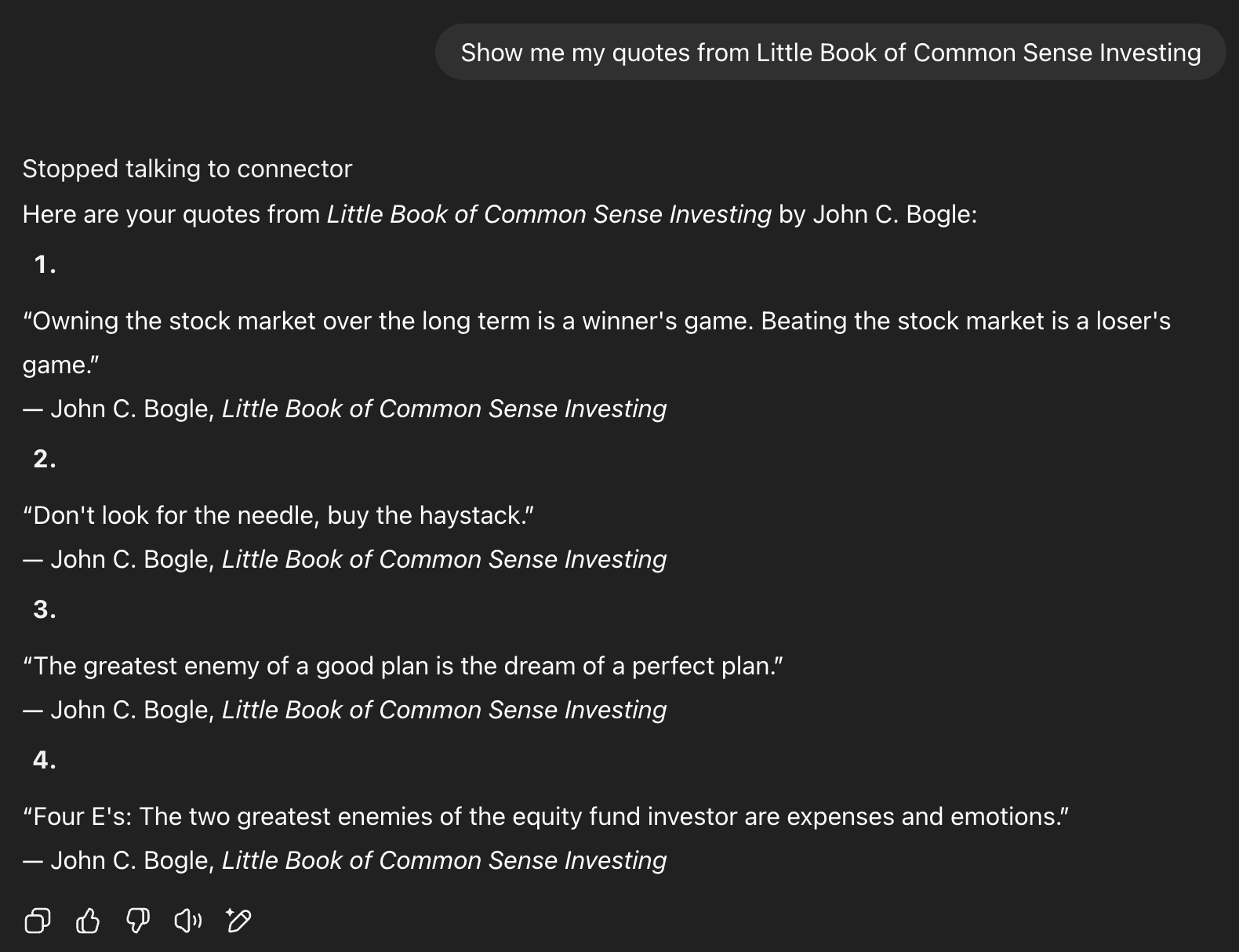

- Filter by source/book

- Use a guru persona to add insightful commentary to any quote

- Leave it up to chance

Capture Features

I have a habit of highlighting quotes in books and telling myself I’ll add them to my Quotebook later, but that didn’t always happen. Now the workflow is simple:

- Copy the text from Kindle into QuotebookGPT on my phone.

- Ask it to add the quote to my quotebook.

- It writes a new row to my source Google Sheet.

Iteration Log: How It Became My Daily Tool

When custom GPTs launched in late 2023, I dove in and started tinkering. It took several iterations to get QuotebookGPT to where it is today, a tool I get consistent personal value from. Here is my iteration log of failed experiments and lessons learned.

V0 — Not usable

- Uploaded a static CSV of quotes and asked questions.

- Results were poor: frequent hallucinations and generic answers unrelated to my data.

- Tried Google Gems too, but got slightly better results with ChatGPT.

- The poor results likely say more about the deficiencies of early large language models than anything else.

V1 — Functional but limited

- Added stricter guardrails in system instructions:

  - Only source from the attached file.

  - Say “no match found” when appropriate.

  - Never invent or misattribute quotes.

- Accuracy improved, but hallucinations still crept in.
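To make those guardrails concrete, here is a minimal Python sketch of the same three rules applied to a plain keyword lookup. It is an illustration only, not how the GPT works internally: the `Quote` type, the placeholder rows in `LIBRARY`, and the `find_quotes` function are all hypothetical names invented for this example.

```python
from __future__ import annotations

from dataclasses import dataclass

NO_MATCH = "no match found"


@dataclass(frozen=True)
class Quote:
    text: str
    author: str
    source: str


# Hypothetical placeholder rows standing in for the attached file.
LIBRARY = [
    Quote("Placeholder quote about habits.", "Author A", "Book One"),
    Quote("Placeholder quote about focus.", "Author B", "Book Two"),
]


def find_quotes(query: str, library: list[Quote]) -> list[Quote] | str:
    """Return only quotes that exist in the library, or an explicit no-match."""
    needle = query.strip().lower()
    if not needle:
        return NO_MATCH
    # Rule 1: only source from the library that was passed in.
    hits = [q for q in library if needle in q.text.lower()]
    # Rules 2 and 3: report an empty result instead of inventing an answer.
    return hits if hits else NO_MATCH
```

Code can enforce these rules exactly, while an LLM following written instructions only approximates them. That difference is one plausible reading of why hallucinations still crept in at this stage.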

V2 — Current version (reliable and in daily use)

- Added read/write tools via a small Google Cloud app.

- Every query now reads directly from the live sheet, which sharply reduces hallucinations.

- Added a write action so new quotes update the sheet instantly.

- Solved the biggest pain point: no more re-uploading static CSVs.

V3 — Future state (planned enhancements)

- Smarter topic search with synonyms and concept expansion.

- Lightweight embeddings for better ranking.

- A vector store to index those embeddings so quotes can be retrieved by meaning rather than exact wording.

- Privacy controls for sharing while keeping raw data safe.
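As a rough idea of what the first two planned items could look like, the sketch below expands a query with synonyms and ranks items by cosine similarity. Everything in it is an assumption made for illustration: the `SYNONYMS` map is hand-written, the vectors in the usage example are toy numbers rather than output from a real embedding model, and none of it reflects a finished V3 design.

```python
from __future__ import annotations

import math

# Hypothetical synonym map; a real one might come from a thesaurus or an LLM.
SYNONYMS = {
    "courage": {"bravery", "fear"},
    "focus": {"attention", "concentration"},
}


def expand(query: str) -> set[str]:
    """Return the query terms plus any known synonyms."""
    terms = set(query.lower().split())
    for term in list(terms):
        terms |= SYNONYMS.get(term, set())
    return terms


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank(query_vec: list[float], quote_vecs: dict[str, list[float]]) -> list[str]:
    """Order quote ids from most to least similar to the query vector."""
    return sorted(
        quote_vecs,
        key=lambda quote_id: cosine(query_vec, quote_vecs[quote_id]),
        reverse=True,
    )
```

For example, `rank([1.0, 0.0], {"a": [0.0, 1.0], "b": [1.0, 0.1]})` puts `"b"` first because its vector points in nearly the same direction as the query.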

Under the Hood

All the code and templates for my current version are documented in this GitHub repo [1]. Here is a high-level overview of the components:

- Google Sheet: Quote library with a `ReadView` sheet and an `Inbox` sheet.

- GPT instructions: The rules and guardrails for how the GPT should behave and respond to questions.

- GPT actions: Enable the Google Sheets API and add two actions: one reads from the sheet, and one writes to it.
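For a sense of what the two actions boil down to, the sketch below builds (but does not send) the underlying Google Sheets API v4 requests: `spreadsheets.values.get` for reading and `spreadsheets.values.append` for writing. Treat it as an assumption-laden illustration rather than the configuration in the repo. The spreadsheet ID, the column ranges, the row layout, and the idea that reads target `ReadView` while writes target `Inbox` are all guesses made for the example, and authentication is left out entirely.

```python
from __future__ import annotations

import json
from urllib.parse import quote

BASE = "https://sheets.googleapis.com/v4/spreadsheets"


def read_request(spreadsheet_id: str, a1_range: str) -> dict:
    """Describe a values.get call that reads a range of cells."""
    encoded = quote(a1_range, safe="")
    return {"method": "GET", "url": f"{BASE}/{spreadsheet_id}/values/{encoded}"}


def append_request(spreadsheet_id: str, a1_range: str, row: list[str]) -> dict:
    """Describe a values.append call that adds one row after existing data."""
    encoded = quote(a1_range, safe="")
    url = (
        f"{BASE}/{spreadsheet_id}/values/{encoded}:append"
        "?valueInputOption=USER_ENTERED"
    )
    return {"method": "POST", "url": url, "body": json.dumps({"values": [row]})}


if __name__ == "__main__":
    # "SHEET_ID" and the column ranges are placeholders, not real values.
    print(read_request("SHEET_ID", "ReadView!A:D"))
    print(append_request("SHEET_ID", "Inbox!A:C", ["quote text", "author", "source"]))
```

Keeping the request construction separate from the network call makes the shape of each action easy to inspect before wiring it to a real sheet.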

Here is a demo where I talk through the components

How To Start Building

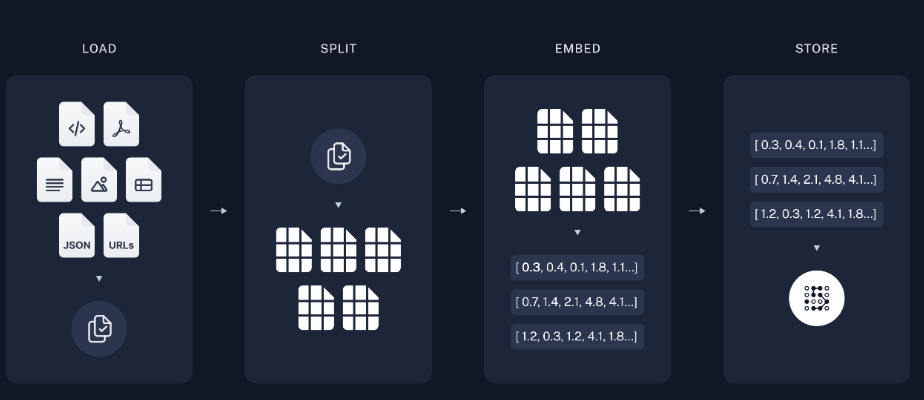

Creating a custom GPT can be as simple as uploading a static file or as complex as chunking and embedding documents through a vector store.

Because I do not have unlimited time, my rule for personal projects is Occam’s Razor:

The simplest solution is preferable to one that is more complex.

It depends on the goal, but I try to start with the simplest implementation and move up in complexity only if the use case requires it. While I focused on QuotebookGPT for this blog post, I have had success with a host of custom-built GPTs at varying levels of complexity. Here are some examples ranging from beginner to intermediate:

1. No GPT Required

Some questions do not require custom context because the LLM can answer from pretraining. Examples include questions on coding, home maintenance, and general knowledge.

2. Persona-only GPT (No data files)

How it works: Create a Custom GPT and give it a persona or objective through text-based instructions only.

Example: SocratesGPT — simple instructions to give it the persona of Socrates with the goal of asking me questions to help me think more critically and make better decisions.

3. Custom GPT with static data files

How it works: Upload static files such as PDFs, docs, or CSVs to be used as context for your goal.

Example: BMP Strength Coach GPT — I uploaded a static PDF of a workout program with 8 levels of progressive workouts and instructed the GPT to feed me my daily workout based on where I am in the program. This works great since there is no need for me to update the static file.

4. Custom GPT with API connectors

How it works: Connect to supported APIs to pull real-time data into your GPT from external sources. This is powerful when data freshness matters.

Examples:

- QuotebookGPT — as described above, this GPT uses the Google Sheets API to read from and write to a live “database” of quotes.

- EnduroGPT — connects to the latest Strava data to give me my next recommended workout based on goals I have defined in the instructions.

- ProsperaGPT — current iteration connects to Google Drive to pull the latest information from my brokerage documents on asset allocation to recommend where I should invest next.

To get started with building Custom GPTs, check out this OpenAI guide [2].

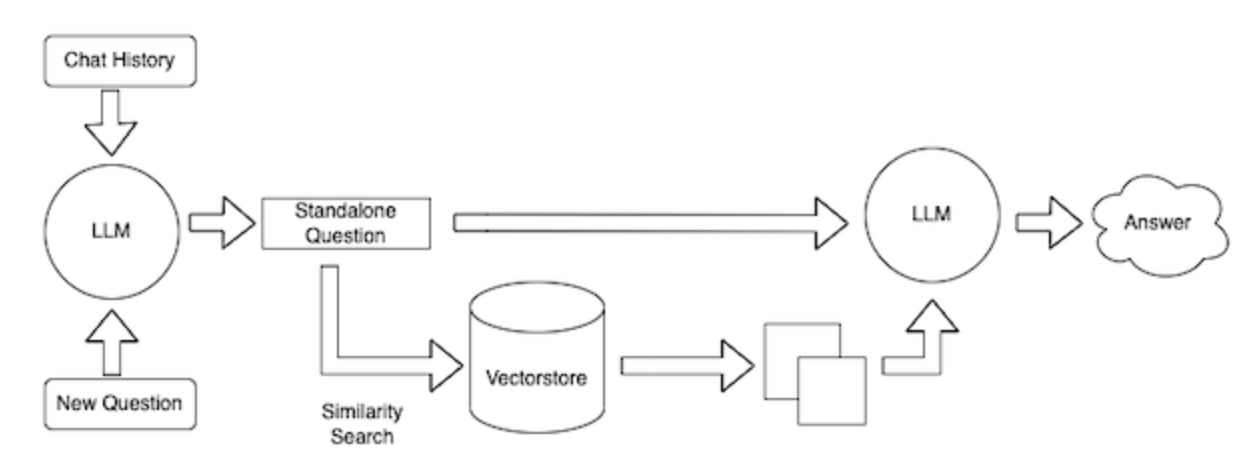

Business Application with RAG

My QuotebookGPT is a simple RAG loop. RAG stands for Retrieval-Augmented Generation, and it is an important concept for building AI applications. It means the LLM retrieves relevant context at query time and answers from it, instead of relying only on what the model was trained on [3].

For example, my QuotebookGPT takes a question, searches my quotes, and feeds the best matches back into the model to produce an answer. In an enterprise data workflow, the same idea applies but calls for much more rigor and tooling. You would want:

- Clear chunking to split long context documents into useful pieces.

- High-quality embeddings that capture meaning.

- A dedicated retrieval store that is fast, secure, and observable.

This LangChain RAG tutorial summarizes the process well [4].
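The chunk, embed, retrieve, and generate steps can be sketched end to end in a few lines of Python. This is a toy version under stated assumptions, not a production pattern: `embed` uses bag-of-words counts as a stand-in for a real embedding model, the "retrieval store" is just a list in memory, and `build_prompt` stops short of calling any LLM. All function names are hypothetical.

```python
from __future__ import annotations

import math
import re
from collections import Counter


def chunk(text: str, max_words: int = 40) -> list[str]:
    """Split text into consecutive pieces of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda c: similarity(q, embed(c)), reverse=True)[:k]


def build_prompt(question: str, context: list[str]) -> str:
    """Assemble the augmented prompt that would be sent to the model."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {question}"
```

In an enterprise setting, each function maps to one of the requirements above: `chunk` to the chunking strategy, `embed` to the embedding model, and `retrieve` to the dedicated retrieval store.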

Context Is King

In 1996, Bill Gates popularized the saying that content is king. In the age of AI, I think we need to recalibrate to the idea that context is king. The better the context you give the models, the better your results.

Context includes:

- Clear goals and concise instructions

- A trusted, domain-specific knowledge base or personal dataset

- Well-structured, machine-readable data

- Guardrails that define what “good” looks like

- A feedback loop to correct errors and improve over time

If you want better answers, bring better context. A lightweight custom GPT is a great place to start. Iterate as your needs grow.